FAST-CAD: A Fairness-Aware Framework for Non-Contact Stroke Diagnosis

Self-supervised multimodal sensing + unified Domain-Adversarial Training and Group-DRO enable equitable, contact-free stroke screening.

AAAI 2026 · Oral

Abstract

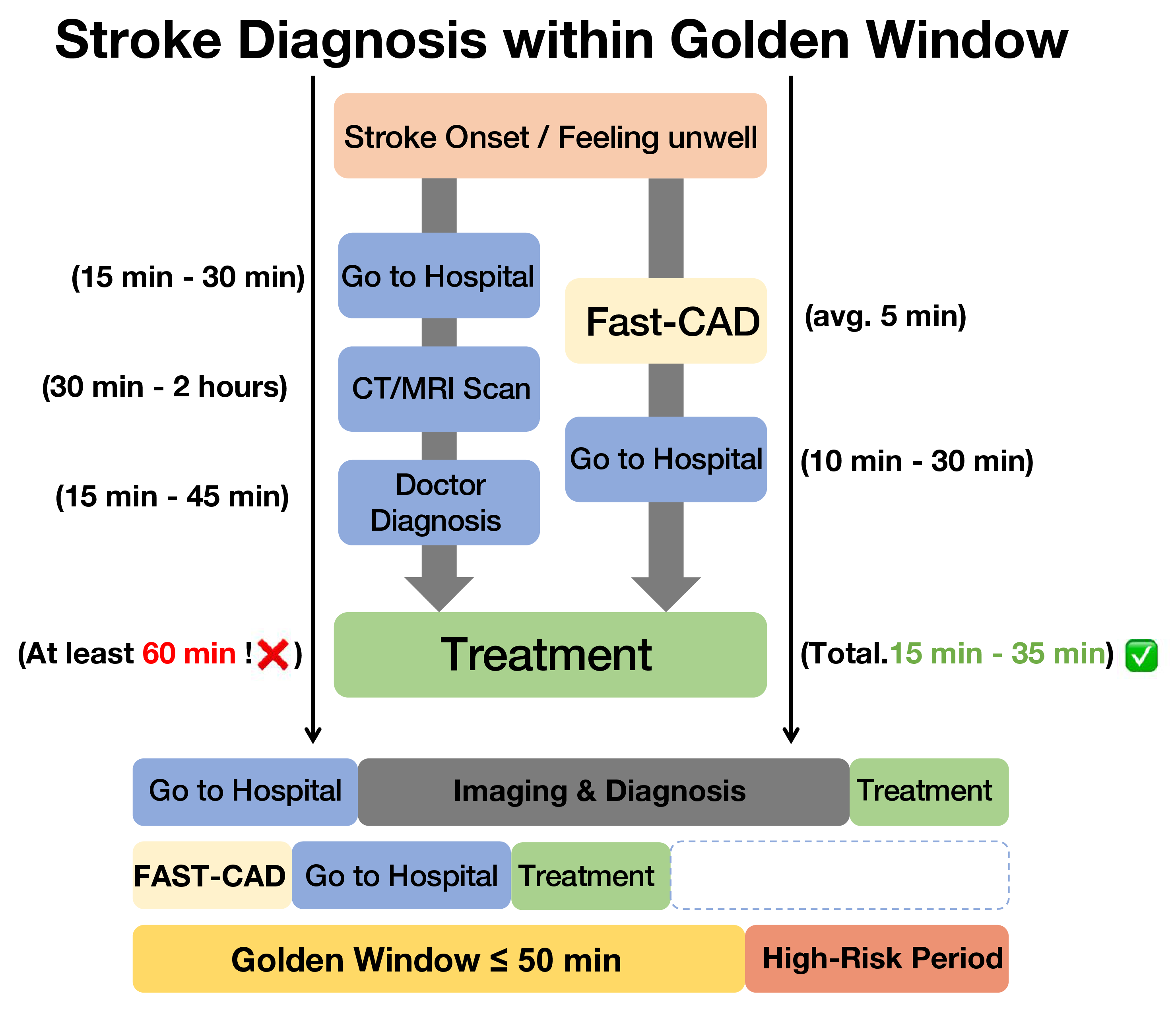

Stroke is an acute cerebrovascular disease, and timely diagnosis significantly improves patient survival. However, existing automated diagnosis methods suffer from fairness issues across demographic groups, potentially exacerbating healthcare disparities. We address this challenge with FAST-CAD, a theoretically grounded framework that integrates Domain-Adversarial Training (DAT) with Group Distributionally Robust Optimization (Group-DRO) for fair and accurate non-contact stroke diagnosis. FAST-CAD leverages domain adaptation theory and minimax fairness to provide convergence guarantees and subgroup risk bounds while learning from a multimodal dataset spanning 12 demographic subgroups defined by age, gender, and posture. Self-supervised encoders paired with adversarial domain discriminators learn demographic-invariant representations, and Group-DRO optimizes worst-group risk. FAST-CAD attains 91.2% AUC with subgroup gaps under 3% while theoretical analysis confirms the effectiveness of the unified DAT + Group-DRO objective, offering practical advances and insights for fair medical AI.

The framework integrates with telemedicine triage so that screening can take place within the stroke golden window, the short period after onset when treatment is most effective.

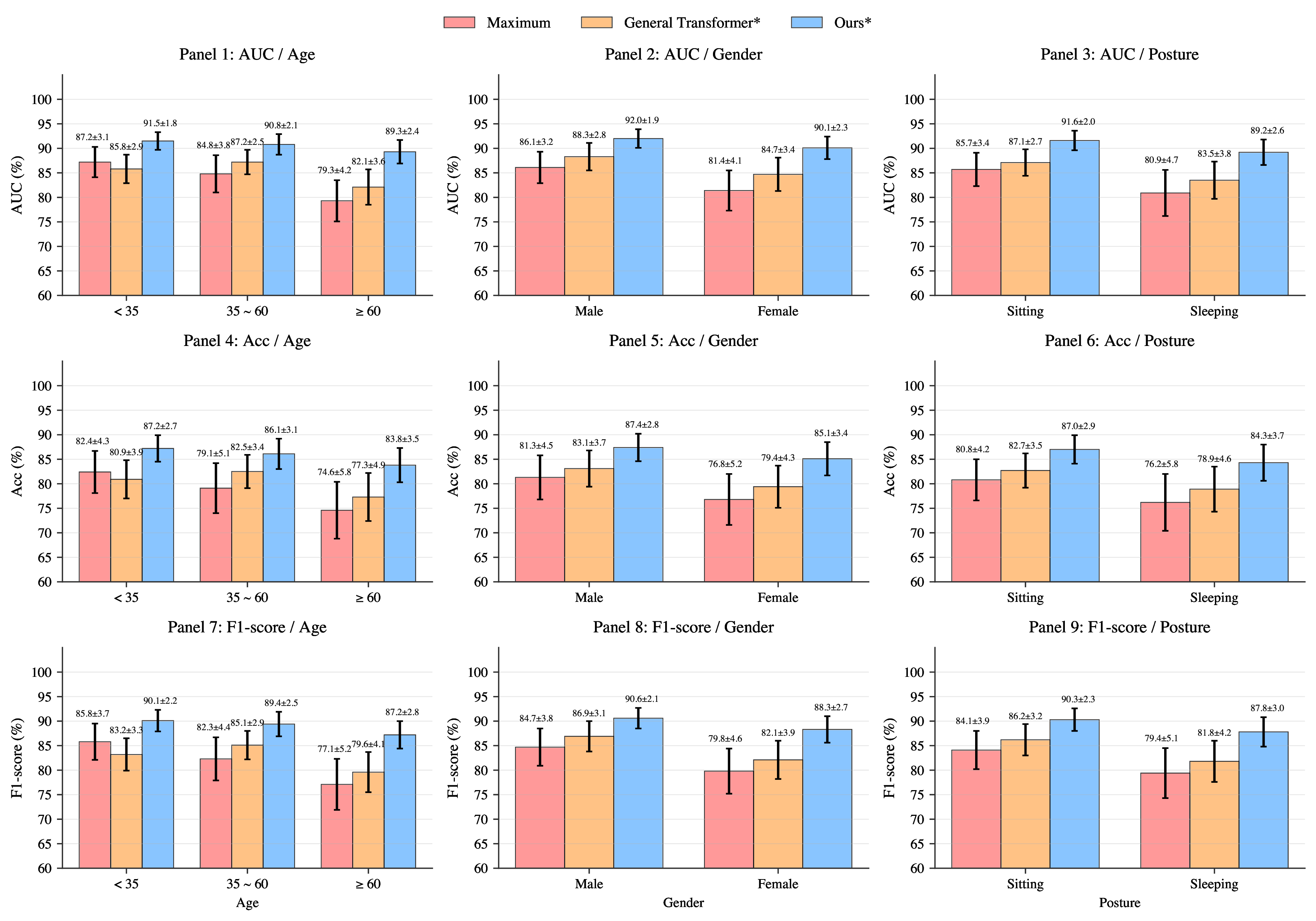

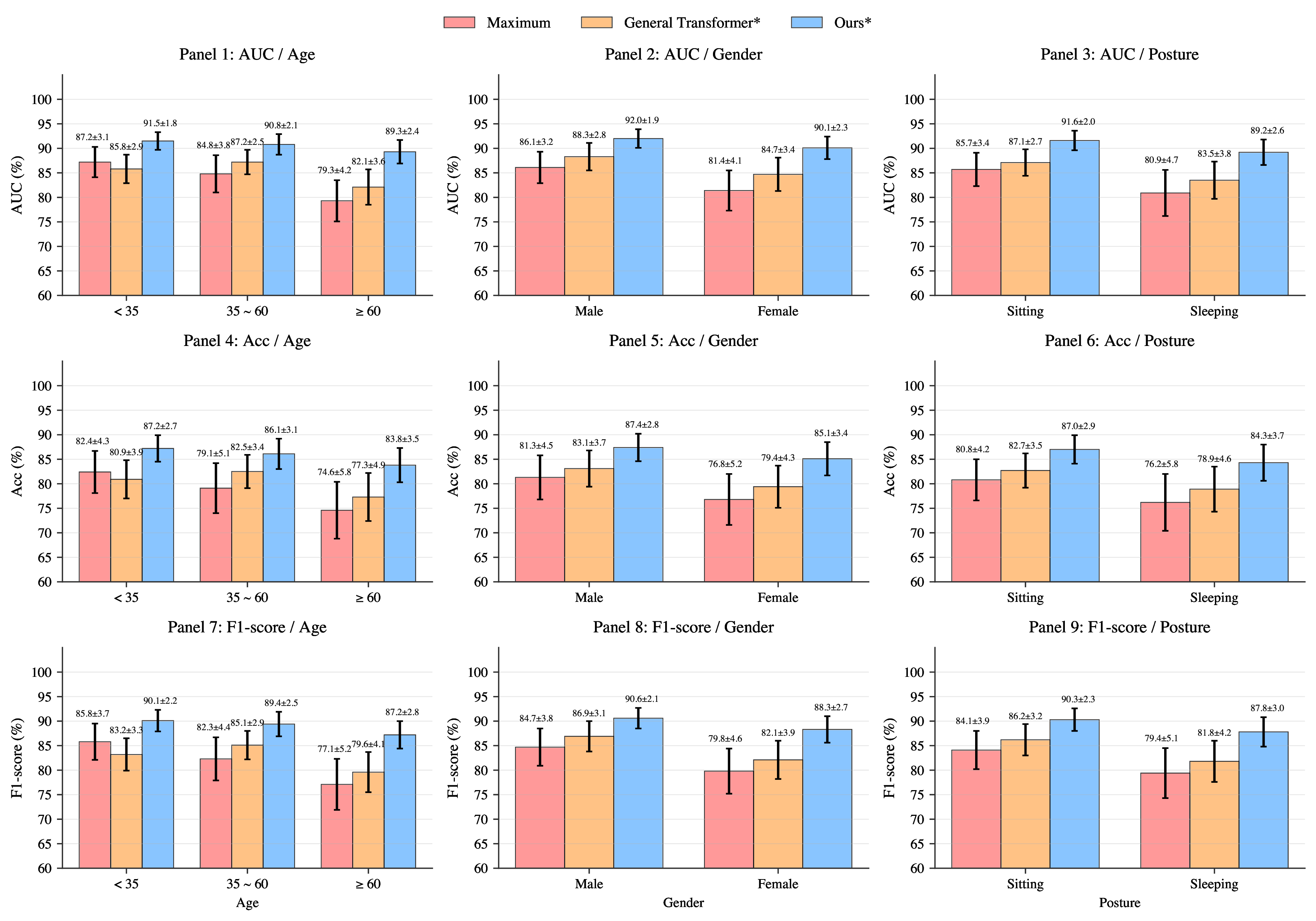

FAST-CAD narrows subgroup gaps and raises worst-group AUCs compared with prior art.

Demographically Stratified Dataset

We curate recordings of 243 subjects across 12 demographic combinations (age × gender × posture) with synchronized RGB video, depth, audio, and keypoint annotations. Collection followed medical ethics protocols under clinician supervision. Evaluation uses 5-fold cross-validation, i.e. a 4:1 train/test split in each fold, in line with clinical reporting practice.

- Balanced representation across age brackets (<35, 35-60, >60) and postures (sitting, sleeping).

- Parallel recording of facial asymmetry, tongue motion, arm drift, and speech for richer FAST cues.

- Automatic demographic auditing enables fairness diagnostics and targeted data acquisition.
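The subgroup structure and the 5-fold protocol described above can be sketched as follows. This is a minimal illustration, not the authors' pipeline: the label strings, the `assign_folds` helper, the random per-subgroup offset, and the synthetic toy cohort are all assumptions made for the example.

```python
import itertools
import random
from collections import defaultdict

# Subgroup axes as described in the text; the label strings are illustrative.
AGES = ["<35", "35-60", ">60"]
GENDERS = ["male", "female"]
POSTURES = ["sitting", "sleeping"]

# 3 x 2 x 2 = 12 demographic subgroups.
SUBGROUPS = list(itertools.product(AGES, GENDERS, POSTURES))


def assign_folds(subject_groups, n_folds=5, seed=0):
    """Assign each subject to a fold, stratifying by demographic subgroup.

    subject_groups maps a subject id to its (age, gender, posture) tuple.
    Subjects in the same subgroup are shuffled and dealt round-robin from a
    random starting fold, so per-fold counts within a subgroup differ by at
    most one.
    """
    rng = random.Random(seed)
    by_group = defaultdict(list)
    for subject, group in sorted(subject_groups.items()):
        by_group[group].append(subject)

    folds = {}
    for group in sorted(by_group):
        members = by_group[group]
        rng.shuffle(members)
        offset = rng.randrange(n_folds)
        for i, subject in enumerate(members):
            folds[subject] = (i + offset) % n_folds
    return folds


# Toy cohort: 243 synthetic subject ids with randomly drawn subgroups.
_rng = random.Random(1)
toy_cohort = {f"s{i:03d}": _rng.choice(SUBGROUPS) for i in range(243)}
toy_folds = assign_folds(toy_cohort)
```

Each fold then serves once as the held-out set while the other four are used for training, which gives the 4:1 ratio in every round.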

Cohort Composition

| Category | Subcategory | Count (%) |
|---|---|---|
| Age | < 35 | 65 (26.7) |
| Age | 35-60 | 96 (39.5) |
| Age | > 60 | 82 (33.7) |
| Gender | Male | 145 (59.7) |
| Gender | Female | 98 (40.3) |
| Posture | Sitting | 149 (61.3) |
| Posture | Sleeping | 94 (38.7) |

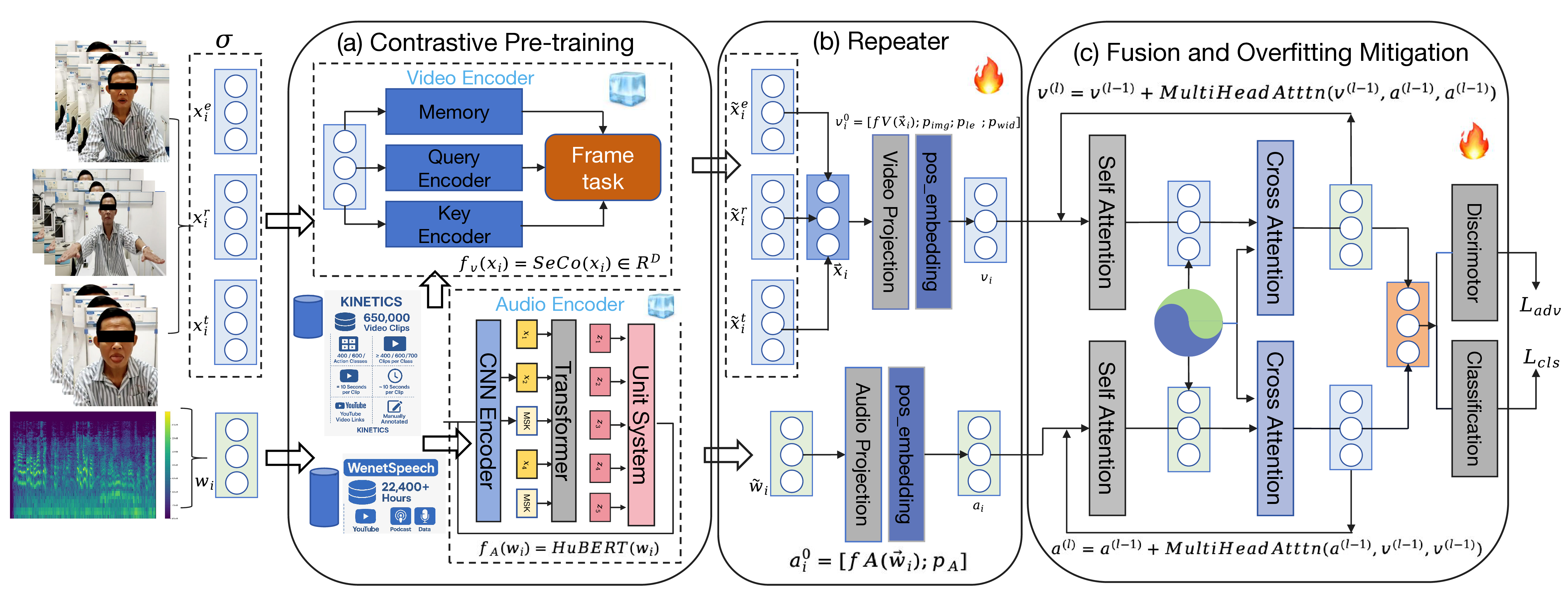

Unified DAT + Group-DRO Training

FAST-CAD alternates between Group-DRO importance-weight updates and domain-adversarial updates against demographic discriminators, minimizing worst-group risk while enforcing demographic invariance.

- Group reweighting: update importance weights q_g via exponentiated gradients to track worst-performing subgroups.

- Domain-adversarial objectives: gradient-reversal heads for age, gender, and posture minimize I(z; a), with empirical d_{H-Delta-H} ~ 0.05.

- Fair classification: optimize L_total = sum_g q_g L_g^{cls} + lambda_adv L_adv with alternating dual-stream fusion that preserves modality balance.
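The reweighting step and the combined objective listed above can be sketched in a few lines. This is a minimal sketch under stated assumptions, not the authors' implementation: the step size `eta`, the value of `lambda_adv`, the helper names, and the toy loss values are all hypothetical, and the per-group and adversarial losses are plain numbers rather than network outputs.

```python
import math


def update_group_weights(q, group_losses, eta=0.1):
    """One exponentiated-gradient step on the Group-DRO weights q_g.

    Each weight is multiplied by exp(eta * L_g) and the result is
    renormalized, so groups with higher loss gain weight.
    eta is an assumed step size, not a value from the source.
    """
    scaled = [q_g * math.exp(eta * loss) for q_g, loss in zip(q, group_losses)]
    total = sum(scaled)
    return [s / total for s in scaled]


def total_loss(q, group_losses, adv_loss, lambda_adv=0.5):
    """L_total = sum_g q_g * L_g^cls + lambda_adv * L_adv.

    lambda_adv = 0.5 is an assumed default, not a value from the source.
    """
    weighted_cls = sum(q_g * loss for q_g, loss in zip(q, group_losses))
    return weighted_cls + lambda_adv * adv_loss


# Toy example with G = 3 groups; the last group has the highest loss.
q = [1 / 3, 1 / 3, 1 / 3]
losses = [0.2, 0.3, 0.9]
for _ in range(20):
    q = update_group_weights(q, losses)

objective = total_loss(q, losses, adv_loss=0.69)
```

With fixed toy losses the weight mass drifts toward the highest-loss group. In a real training loop the per-group losses would be recomputed on every minibatch, and the adversarial term would reach the encoder through gradient-reversal heads rather than as a fixed number.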

Theoretical analysis yields O(sqrt(log G / T)) convergence and fairness bounds linking worst-group risk to mutual information penalties.

Fairness Diagnostics

Demographic discriminators trained on the learned representations fall to chance-level accuracy (age 33.3%, gender/posture 50.0%), validating domain invariance. Group-DRO's empirical convergence follows the theoretical O(1/sqrt(T)) rate, while subgroup risk gaps fall below 1.7 percentage points.

Domain Gap

d_{H-Delta-H} plateaus near 0.05, indicating demographic discriminators cannot distinguish age/gender/posture partitions after adaptation, which keeps subgroup decision boundaries aligned.
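The source does not state how d_{H-Delta-H} was estimated. One common empirical stand-in is the proxy A-distance, 2(1 - 2·err), where err is the held-out error of a binary domain discriminator. The sketch below assumes that estimator; the function name and the example error value are hypothetical.

```python
def proxy_a_distance(discriminator_error):
    """Proxy A-distance 2 * (1 - 2 * err) for a binary domain discriminator.

    err = 0.5 (chance on a balanced two-way split) gives 0; err = 0 gives 2.
    """
    if not 0.0 <= discriminator_error <= 1.0:
        raise ValueError("error must lie in [0, 1]")
    return 2.0 * (1.0 - 2.0 * discriminator_error)


# Hypothetical discriminator that is only slightly better than chance
# on a balanced two-way split (error 0.4875).
example_distance = proxy_a_distance(0.4875)
```

Under this assumed estimator, a distance near 0.05 corresponds to a discriminator barely above chance on a balanced two-way partition. A three-way attribute such as age bracket would need a multi-class or one-vs-rest variant, which is not shown here.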

Mutual Information

Dual-stream fusion with adversarial heads constrains I(z; a) to ≤ 0.02, limiting how much any modality can leak demographic cues. This is an essential precondition for the Rawlsian (worst-group) fairness guarantees.

Robust Optimization

Exponentiated-gradient Group-DRO spikes minority weights when their loss rises, steering updates toward worst-group AUC and yielding 3.0% max-min gaps without extra supervision.

Results & Tables

FAST-CAD improves on prior non-contact stroke screening methods in accuracy (91.2% AUC), fairness (3.0% max-min subgroup gap), and domain robustness (83.7% AUC on an external cohort).

Comparison with Prior Work

Benchmark Suite

| Method | Input | AUC (%) | Acc (%) | F1 (%) | Sens (%) | Spec (%) |
|---|---|---|---|---|---|---|
| I3D | RGB | 68.1 ± 9.7 | 70.9 ± 10.6 | 75.8 ± 9.7 | 65.2 | 73.1 |
| TimeSformer | RGB | 74.4 ± 7.2 | 79.9 ± 6.2 | 85.4 ± 6.3 | 72.3 | 78.1 |
| DeepStroke | Multi | 84.5 ± 5.6 | 76.2 ± 5.9 | 82.3 ± 4.7 | 82.1 | 85.1 |
| VideoMAE | RGB | 81.0 ± 3.2 | 78.2 ± 5.6 | 82.7 ± 4.8 | 78.4 | 82.1 |
| M3Stroke | Multi | 86.3 ± 4.3 | 79.2 ± 3.9 | 84.2 ± 4.2 | 84.1 | 87.2 |
| wav2vec 2.0 | Audio | 63.1 ± 3.7 | 71.6 ± 4.7 | 73.4 ± 6.8 | 59.8 | 75.3 |
| WavLM | Audio | 68.4 ± 3.8 | 72.8 ± 4.3 | 74.9 ± 5.2 | 66.2 | 76.4 |
| Cross-Attention | Multi | 88.6 ± 2.2 | 83.1 ± 4.8 | 87.2 ± 3.4 | 86.4 | 89.1 |
| FAST-CAD | Multi | 91.2 ± 1.5 | 87.2 ± 3.1 | 90.8 ± 2.3 | 89.1 | 92.3 |

Fairness Metrics

Equity Gap

| Method | Worst AUC | Delta max-min | Gini |
|---|---|---|---|
| Maximum (MViT) | 75.2% | 8.0% | 0.042 |
| General Transformer* | 81.8% | 5.8% | 0.026 |
| FAST-CAD | 89.5% | 3.0% | 0.011 |

*Feature-concatenation variant built on the same encoders.
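The three fairness columns can be computed from per-subgroup AUCs roughly as sketched below. The source does not spell out its Gini definition, so the mean-absolute-difference form used here is an assumption, as are the function name and the toy AUC values, which are not the paper's per-subgroup results.

```python
def fairness_summary(subgroup_aucs):
    """Return worst-group AUC, max-min gap, and Gini coefficient.

    The Gini coefficient here is the sum of absolute differences over all
    ordered pairs divided by 2 * n^2 * mean (0 means perfectly equal).
    This definition is an assumption; the source does not specify one.
    """
    values = list(subgroup_aucs.values())
    n = len(values)
    mean = sum(values) / n
    pairwise = sum(abs(a - b) for a in values for b in values)
    return {
        "worst_auc": min(values),
        "max_min_gap": max(values) - min(values),
        "gini": pairwise / (2 * n * n * mean),
    }


# Hypothetical per-subgroup AUCs (in percent) for four subgroups.
toy_aucs = {"g1": 90.0, "g2": 92.0, "g3": 89.0, "g4": 91.0}
summary = fairness_summary(toy_aucs)
```

Reporting all three together is useful because the max-min gap only looks at the two extreme subgroups, while the Gini coefficient reflects how unevenly performance is spread across all of them.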

Modality Fusion Study

Design Ablation

| Modalities | AUC (%) | Delta vs Face | Params |
|---|---|---|---|
| Face | 82.1 ± 5.1 | -- | 28M |
| Face + Tongue | 85.7 ± 4.7 | +3.6 | 38M |
| Face + Tongue + Body | 88.3 ± 2.2 | +6.2 | 45M |
| All Modalities | 91.2 ± 1.5 | +9.1 | 59M |

Cross-Domain Validation

External Cohort

| Method | Original AUC | External AUC | Drop |
|---|---|---|---|
| MViT | 78.0% | 65.3% | -12.7% |
| M3Stroke | 86.3% | 71.6% | -14.7% |
| FAST-CAD | 91.2% | 83.7% | -7.5% |

External cohort: 86 participants recorded in home and telemedicine settings.

BibTeX

@misc{sha2025fastcadfairnessawareframeworknoncontact,

title={FAST-CAD: A Fairness-Aware Framework for Non-Contact Stroke Diagnosis},

author={Tianming (Tommy) Sha and Zechuan Chen and Zhan Cheng and Haotian Zhai and Xuwei Ding and Keze Wang},

year={2025},

eprint={2511.08887},

archivePrefix={arXiv},

primaryClass={cs.LG},

url={https://arxiv.org/abs/2511.08887}

}